On February 17, 1898, Joseph Pulitzer and William Randolph Hearst adorned the front pages of their respective newspapers – The New York World and The New York Journal – with the same sensationalist illustration depicting the explosion of USS Maine, a cruiser sent to Havana in the run-up to what would become the Spanish-American War. At a time when other, more respected newspapers exercised restraint (given the unverified reasons for the cruiser’s explosion), Hearst and Pulitzer pressed on and published a fabricated telegram, which implied sabotage. While the U.S. Navy’s investigation found that the explosion was set off by an external trigger, a Spanish investigation asserted the opposite, claiming the explosion was the result of something that happened aboard the ship. Historians of journalism still debate the extent to which “yellow journalism” influenced the investigation. No matter the cause, war broke out with the U.S. blockade of Cuba in April 1898. The American victory in the war was sealed by the Treaty of Paris, signed in December 1898 and ratified in 1899, which granted the United States control over Guam, Puerto Rico, and the Philippines and transformed it into a world power. The war was a turning point and had lasting effects on U.S. foreign policy over the next half century, initiating a period of external involvement driven by expanding economic and territorial interests.

As we can see from this historical episode, fake news might seem like a “new” issue, but it most certainly is not. Every communications and information revolution in history came with its own challenges in information consumption and new ways of framing, misleading, and confusing opinion. The invention of the quill pen brought bureaucratic and diplomatic writing and led to wars of official authentication (the seal). The invention of the printing press led to a war of spies and naval information brokers across the Mediterranean in the 16th century. The typewriter and telegram birthed the cryptography wars of the early 20th century.

So it goes with mass use of social media.

Fake news belongs to the same habitus of modern digital spoilers and is often discussed in tandem with trolls and bots. Most of the existing studies focus on this mischievous trio’s effects on elections and computational propaganda. Yet we are still largely in the dark about the extent to which fake news, trolls, and bots can draw countries into war or escalate a diplomatic crisis. Can countries with good relations be pitted against each other through computational propaganda? Or is it more effective to use these methods to escalate existing tensions between already hostile governments? The recent crisis over Qatar gives us some clues.

The crisis began on May 23, when a number of statements attributed to the Qatari emir, Sheikh Tamim Bin Hamad al-Thani, began surfacing on the Qatar News Agency – the country’s main state-run outlet. The statements were on highly inflammatory issues – namely Iran and Hamas. Once they were read in Riyadh and elsewhere in the region, members of the Saudi-dominated Gulf Cooperation Council were incensed. Although Qatari officials disowned these statements, reporting a hack of state media networks, Saudi and Emirati state-owned networks ignored the reports and pressed for a full-on condemnation of the statements. Later, both Riyadh and Abu Dhabi declared their protest and took a range of measures: closing their airspace and land access to Qatar Airways, accusing Doha of “supporting terrorism,” and, most recently, declaring a list of 59 people and 12 groups affiliated with Qatar as “terrorists.”

On June 7, the FBI reported that Russian hackers were behind the hacking of the Qatar News Agency and that the statements attributed to the Qatari emir were planted by these hackers. Russia denied these reports. Saudi Arabia and the United Arab Emirates seemed to be unfazed by the possibility of a hack. According to Krishnadev Calamur of The Atlantic, this is for two reasons. First, the purportedly planted statements are viewed by many as the true positions of the Qatari government and have been for a long time. Second, the long build-up of tensions between Qatar and the other Gulf Arab states would have eventually exploded one way or another (indeed, they have before). Regardless, the situation continues to escalate and has even led the Turkish government to offer physical military support by passing a fast-tracked parliamentary bill to deploy more troops in Qatar.

This mess provides a good case study in exploring the effects of fake news and bot use during an international diplomatic crisis as well as the extent to which these digital spoilers affect crisis diplomacy.

Battle of Hashtags: Geographic Contestation of Digital Crises

Marc Owen Jones presented a good case on bot activity before and during the Gulf crisis, arguing that 20 percent of Twitter accounts that posted anti-Qatar hashtags were bots. He put forth a well-sourced case that the preparations behind the anti-Qatar digital propaganda started in mid-April, earlier than the alleged hack of the Qatar News Agency. Bot research has grown increasingly relevant in the last couple of years due to the enormous capacity of these tools to disseminate fake information on social media platforms. Fake news works by creating shock and feeding the existing belief system and emotional reality map of its intended audience. What makes fake news problematic is the sheer impossibility of responding immediately to its misleading claims in the heat of a crisis. To that end, fabricated stories don’t aim to have a lasting effect, but exploit a small window of collective attention. Although social media outlets and independent websites have introduced algorithmic and self-check verification tools, propagandists adapt to the changing circumstances, continuously improving in sophistication and thereby avoiding detection.

We hear over and over that democracies may be more vulnerable to fake news, and that election periods in particular leave these countries exposed. We similarly hear that digital technologies are making democratic politics “impossible” and even endanger democracy itself. While these concerns are not without grounding, these findings seem to reflect the simple fact that there is a disproportionate concentration of research on the effects of bots and fake news on Western democracies. We still have insufficient evidence to test whether bots and fake news have a more or less destructive impact on authoritarian systems or whether digital technologies are making authoritarianism more or less “impossible.”

The Qatar crisis gives us an insight into how things can get far worse when fake news proliferates during crises that primarily involve authoritarian systems. Although countering digital propaganda is hard enough in open and free political systems, things get far harder in authoritarian settings. These systems limit tools and actors that might engage in checking facts, challenging narratives, and disseminating alternative frames. Although democracies do suffer from strategic surges in fake news, their ability to verify, adapt, and respond to them is superior. Information-seeking behavior is strongly embedded in social structures and grassroots politics. Authoritarian systems don’t have this asset. They heavily censor and restrict information and discourage civil society from engaging in politically relevant information-seeking behavior.

To make matters worse, political systems that are structured around single individuals tend to be overly emotional by design. An inflated sense of national pride combined with a cult of leadership offers rich soil for exaggerated emotional responses to external crises. The Qatar crisis shows us that the combination of these factors renders authoritarian states far more vulnerable to the effects of fake news proliferated through bots. It also demonstrates that when faced with a crisis, authoritarian states are more likely to make hasty decisions that end up escalating the situation into a game of chicken. The danger becomes exponentially worse as the proportion of authoritarian countries involved in a particular crisis increases. If the majority of the parties to a conflict are authoritarian, it becomes too easy for external state or non-state digital actors to push them into a continuous escalation through fake news. Without an infrastructure (cyber, democratic, or civil society-wise) to counter fake news in real time, the danger of an armed conflict increases exponentially in diplomatic crises that feature authoritarian governments.

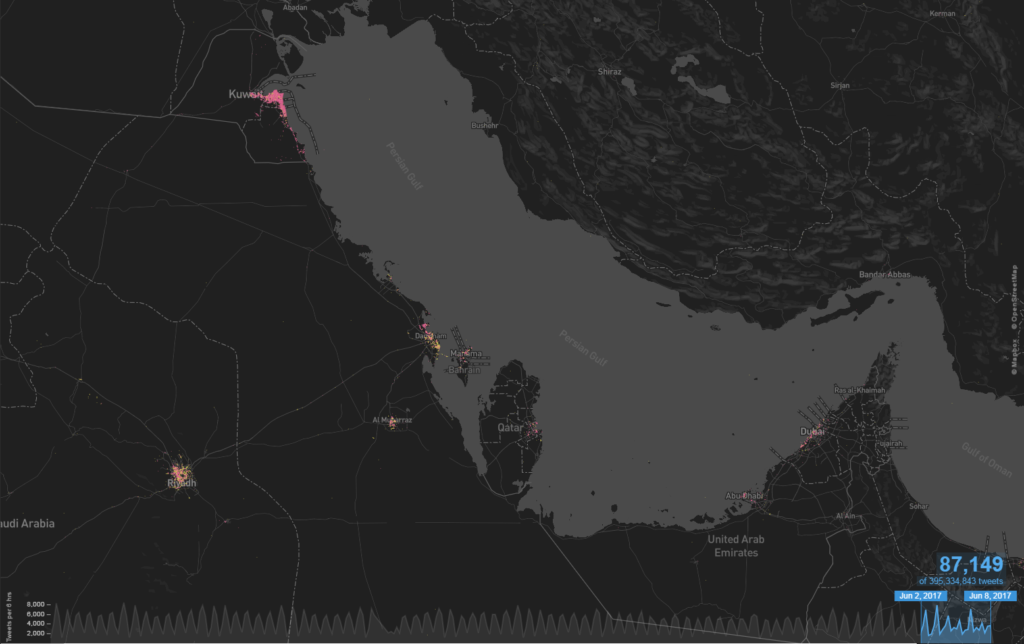

Geospatial time-frequency analysis of hashtags is a useful tool to measure crisis mobilization and escalation dynamics during digital crises. Hashtags are also good microcosms of fake news dissemination through bots. It is possible to explain bot-driven fake news dissemination in a crisis by monitoring, in tandem, bot-driven hashtag proliferation on Twitter. By focusing on the geographic and temporal diffusion of the most popular hashtags, analysts can often infer the origin point of digital spoilers and model the approximate network through which they spread. To measure the contested digital geography of the Gulf crisis, I selected two of the most popular hashtags used between June 2 and 7, قطع_العلاقات_مع_قطر# – Cut Relations with Qatar (54,508, including variants) and الشعب_الخليجي_يرفض_مقاطعه_قطر# – People of the Gulf are Refusing to Boycott (Qatar) (11,356, including variants), and used the MapD engine to create a time-frequency analysis. Variants here are hashtags that contained typos or shorter versions of longer hashtags.
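As a rough illustration of what a time-frequency count involves, the sketch below folds hashtag variants into a canonical form and tallies tweets per hashtag, region, and time bucket. It is a minimal sketch under stated assumptions, not the MapD workflow used for the maps in this article: the record layout, the placeholder hashtag strings, the `VARIANTS` map, and the six-hour bucket size are all hypothetical.

```python
from collections import Counter
from datetime import datetime

# Hypothetical records: (ISO timestamp, region label, hashtag text).
# The hashtag strings are ASCII placeholders, not the real Arabic hashtags.
SAMPLE_TWEETS = [
    ("2017-06-05T06:10:00", "Kuwait", "cut_relations_with_qatar"),
    ("2017-06-05T07:45:00", "Kuwait", "cut_relations_qatar"),  # shortened variant
    ("2017-06-05T13:05:00", "Riyadh", "cut_relations_with_qatar"),
    ("2017-06-06T09:30:00", "Doha", "gulf_people_refuse_boycott"),
]

# Hypothetical variant map: typo or shortened form -> canonical hashtag.
VARIANTS = {"cut_relations_qatar": "cut_relations_with_qatar"}


def canonical(tag, variants=VARIANTS):
    """Return the canonical hashtag for a variant, or the tag itself."""
    return variants.get(tag, tag)


def time_frequency(tweets, bucket_hours=6):
    """Count tweets per (canonical hashtag, region, time bucket)."""
    counts = Counter()
    for stamp, region, tag in tweets:
        ts = datetime.fromisoformat(stamp)
        # Round the timestamp down to the start of its bucket.
        bucket = ts.replace(
            hour=ts.hour - ts.hour % bucket_hours,
            minute=0, second=0, microsecond=0,
        )
        counts[(canonical(tag), region, bucket)] += 1
    return counts


if __name__ == "__main__":
    for key, n in sorted(time_frequency(SAMPLE_TWEETS).items()):
        print(key, n)
```

Plotting these counts by region over successive buckets gives the kind of diffusion picture described here, with the caveat that self-reported or randomized locations can distort any region label.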

Cumulative geographic distribution of both pro- and anti-Qatar hashtags. June 2 to 7

To measure geographic diffusion of these hashtags and their smaller variants, I have used MapD to generate a time-frequency event map of two of the most popular hashtags (without mapping variants) used through the crisis.

Time-frequency distribution of الشعب_الخليجي_يرفض_مقاطعه_قطر# – People of the Gulf are Refusing to Boycott (Qatar)

Time-frequency distribution of قطع_العلاقات_مع_قطر# – Cut Relations with Qatar

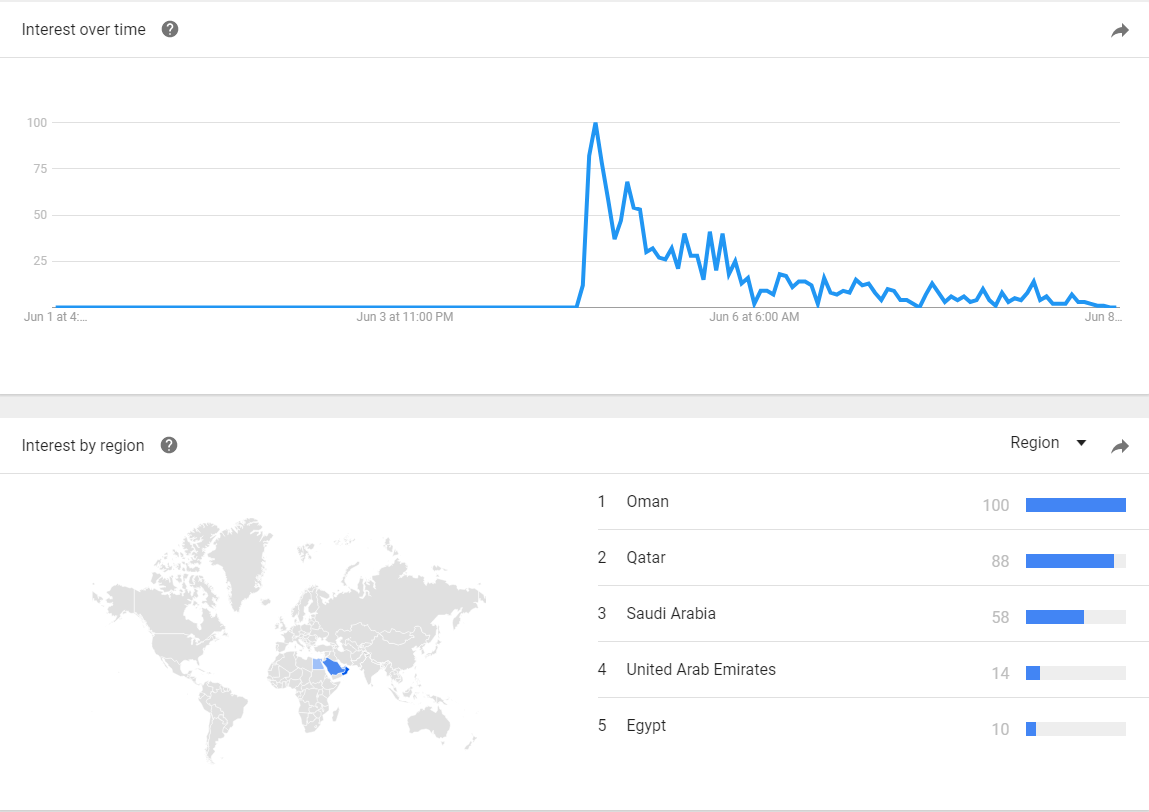

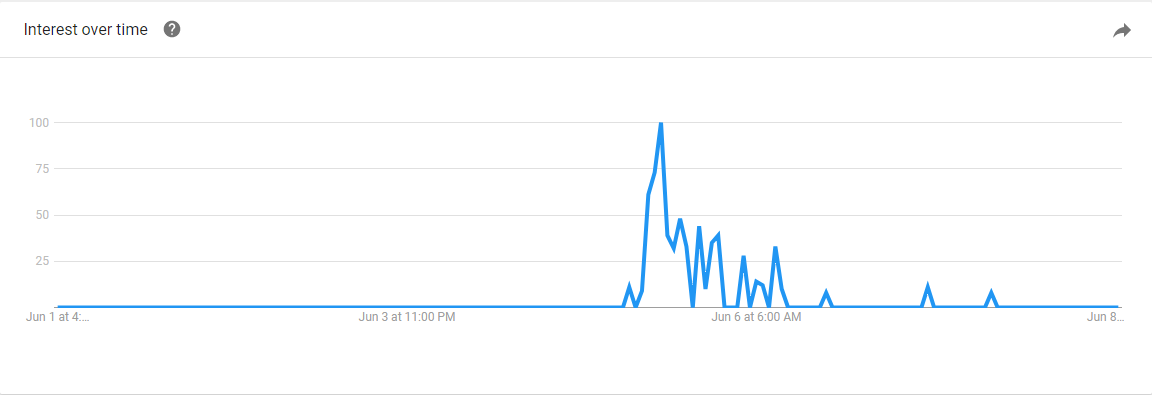

It appears that the bulk of the initial wave advocating for cutting off relations with Qatar originated in Kuwait and spread fast, suggesting heavy bot usage. The counter hashtag was protective of Qatar and increased gradually, without the kind of significant peak its rival hashtag experienced. Anti-Qatar hashtags seem to be more organized and suggest advance preparation. Similar trends can be observed in Google searches, with interest in the anti-Qatar hashtag spiking faster and remaining longer in circulation, compared to the sporadic small increases in the pro-Qatar hashtag and its rapid decline.
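The contrast between a sharp early peak and a gradual rise can be put into numbers. The sketch below is one possible way to do so, offered as an assumption rather than the method behind the figures here: `burst_profile` is a hypothetical helper that reports what share of all activity fell in the single busiest interval and how many intervals passed before half of the total volume had appeared. The two input series are invented for illustration.

```python
def burst_profile(interval_counts):
    """Summarize how front-loaded a series of per-interval counts is.

    Returns the share of total volume in the busiest interval and the
    index of the interval at which cumulative volume reaches half.
    """
    total = sum(interval_counts)
    if total == 0:
        return {"peak_share": 0.0, "intervals_to_half": None}
    peak_share = max(interval_counts) / total
    running = 0
    for index, n in enumerate(interval_counts):
        running += n
        if running * 2 >= total:
            return {"peak_share": peak_share, "intervals_to_half": index}


if __name__ == "__main__":
    # Invented series: one front-loaded, one evenly spread.
    print(burst_profile([0, 50, 30, 10, 10]))
    print(burst_profile([10] * 10))
```

A high peak share reached within very few intervals is consistent with coordinated or automated posting, but it is not proof of it: a genuinely viral reaction to breaking news can produce a similar shape.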

Google Trends Data of قطع_العلاقات_مع_قطر# – Cut Relations with Qatar

Google Trends Data for – الشعب_الخليجي_يرفض_مقاطعه_قطر# – People of the Gulf are Refusing to Boycott (Qatar)

Regional interest diffusion over the Qatar crisis is identical for both hashtags, although the anti-Qatar hashtag (and other variants) spread much faster and at a higher rate. The scattered nature of these hashtags indicates randomized geolocation – a frequently recurring feature of bot accounts. The Qatari response seems to be less reliant on bots, but also demonstrates a lack of preparedness for the type of concerted challenge introduced by the anti-Qatar front.

Is it possible to know who’s behind the combined bot activity, hacks, and fake news dissemination? Attribution is very hard, especially with the most sophisticated of hacks. Hackers can easily add Russian or Chinese keyboard signatures or characters in the code to suggest these countries were behind such activity. Even if bots or fake news can be traced back to one geo-signature, that location may reflect VPN use or a number of masking tools.

What we can tell from the hashtag geospatial study is that the anti-Qatar digital traffic suggests advance preparation and a well-conducted centralized effort. A very large number of tweets contain the same hashtag قطع_العلاقات_مع_قطر# whereas the Qatari response is weak and sporadic, with tweets containing clustered versions of الشعب_الخليجي_يرفض_مقاطعه_قطر#, suggesting more organic proliferation. This reinforces Jones’ findings that this is indeed a crisis driven by bots and fake news with advance preparation and has little to do with whether the Qatari leader really said the statements attributed to him. Just like in the lead-up to what became the Spanish-American War, the intention to escalate was already there and the resultant information spoilers may or may not have had an effect on the decision to escalate.

The Origins of Digital Diplomatic Crises

At the time of writing this sentence, Qatari-owned Al Jazeera Media Network began reporting successive cyber-attacks on all its systems, websites, and media platforms. As with most cyber-attacks, this too will be hard to attribute, which adds fuel to international crises during escalation phases. The eventual attribution will also be unlikely to change much with regard to short-term political outcomes, as bot-transmitted fake news is used to seize the initiative and trigger emotional responses over a small window of opportunity. From this perspective, fake news in diplomatic crises brings back some of the scholarship from the Cold War, specifically on nuclear escalation, time constraints on decisions, cognitive bias, and prospect theory variants. This is especially the case with crises that include authoritarian states, as their restrictive and centralizing nature renders them the perfect victims for bot-driven fake news. When the fake news involved directly addresses authoritarian leaders or their families, escalations become especially likely.

The greater vulnerability of authoritarian regimes to fake news during crises leaves their leaders with two alternatives in digital diplomatic crises. Either these leaders will put self-restraint mechanisms in place, which will prevent them from taking drastic measures during digital crises, or they will adapt to the new realities of political communication and allow social verification structures to take root within civil society. While verification and fact-checking can be troublesome for authoritarian states, which themselves often appeal to the emotional response mechanisms of their citizens, they are nonetheless becoming safety valves of national security in the cyber realm.

Diversionary politics of the digital space is now a structural aspect of world politics. Bots, trolls, and fake news will continue to be used by state and non-state actors alike to manipulate and distract attention economies everywhere in the world. Propaganda and psy-ops are old tricks, but the rapid changes in their methods continue to baffle less cyber-savvy countries and force them into making mistakes. Countries will paradoxically have to open up, rather than close down, in the face of these tricks, both to bring more technological expertise into the ranks of the government and to harness the tools of civil society. These tools are often painfully frustrating for authoritarian governments, but nonetheless offer fast and reliable verification mechanisms that government organs can’t provide in times of high-stakes escalation.

The Qatar crisis has shown how dangerous fake news can be, not least in the context of the Gulf. Given that a fifth of the world’s oil passes through the Strait of Hormuz, fake news and bots in the Qatar crisis are, in fact, global problems. With the onset of this diplomatic crisis, other countries too have to predict and plan for fake news diffusion during conflicts and diplomatic escalations and learn to operate in a system where uncertainty, confusion, and diversion become the main metric of political communication.

Akin Unver is an assistant professor of international relations at Kadir Has University in Istanbul and currently a dual research fellow at the Oxford Internet Institute and the Alan Turing Institute in London. (akin.unver@oii.ox.ac.uk)