
Everything is normal. Then it’s not. The world changes. In some cases, it breaks. What you thought was strong becomes brittle. What you thought was stable suddenly dissolves.

From financial markets and cyberspace to pandemics, migration, and climate change, complex systems surround us and fail in ways that separate them from traditional understandings of security challenges. As the world becomes more connected — the culmination of the promise of globalization — it becomes more complex. That complexity carries with it benefits for some and a hidden cost for all: fragility.

Understanding how complex systems fail is a critical first step toward building the capabilities and capacity required to solve 21st-century security challenges. This article defines complex systems, explains how they fail, and uses that framework to explore two security challenges. The first is broadly defined ecological crises associated with pandemics, environmental stress, population growth, and migration. The second is cyber security and the related challenge of gray zones. From this vantage point, I review the proposals for increasing national resilience in the 2020 U.S. Cyberspace Solarium Commission Report as exemplars of how to approach 21st-century security challenges.

Complex Failure

A complex system is defined by interrelationships that produce emergent complexity. The whole is greater than the sum of its parts. Rather than look in a linear manner at individual parts of the system, analysts must assess the nonlinear whole, tracing emergence, shifting patterns of adaptation, and feedback loops that can stabilize or destabilize the system over time. Equilibrium is transitory and always in flux around a threshold, a concept called bounded or deterministic chaos.

Systems tend to be resilient and adaptive, reorganizing and mutating in response to change. They can even benefit from variability and stress. At the same time, there are often thresholds after which systems struggle to cope with increasing stress and variability, and become fragile. Once you cross this threshold, the system changes — often in a dramatic fashion.

Complex systems usually contain flaws that stress events or sudden shifts exacerbate. For example, the COVID-19 pandemic didn’t create mistrust in the U.S. federal government or an erosion in its ability to mobilize and deploy resources. It exposed and amplified both. Systems, like humans, are inherently flawed and adaptive.

Failure, therefore, is rarely a function of a single shock event. Fragility arises from a combination of factors that interact in unpredictable ways. In the 1990s, Richard Cook outlined 18 points on how complex systems fail, highlighting that catastrophe, meaning true system collapse, usually arises from multiple failures. There is rarely one cause. Multiple events combine to shift an otherwise functioning public health system or economy into a death spiral that threatens global supply chains. Failure in one part of the system can cascade into other parts. The current pandemic stresses public health and, through our response, ripples into the economy, causing mass layoffs and a collapse in consumer confidence, trade, and industrial production. The same logic helps explain the sudden, dramatic state failures seen at the end of the Cold War in multiple countries, where the removal of U.S. and Soviet patronage triggered larger governance problems linked to deep colonial legacies.

Human cognition and perception further compound failure. Chris Clearfield and Andras Tilcsik argue in Meltdown that the increasingly intricate relationships that define our world create a situation in which humans struggle to comprehend complexity. The intuitive stories we tell ourselves about cause and effect, or the search for single points of failure, push us further into the abyss. We default to monocausal explanations that simplify more than they clarify. We miss a tragic truth: Fragility is often hidden. You cannot predict failure in advance with any degree of certainty, which complicates planning and policy.

Seeing the world as interconnected systems changes how we think about national security. Robert Jervis used systems thinking to reexamine international politics, drawing on concepts like emergence to describe the crisis politics of the Cold War and the escalation of the First World War. Clausewitz’s emphasis on fog, friction, and uncertainty, born of the interplay of chance, violence, and rational planning, showed that war itself is an emergent system whose character changes over time.

Fragility and Human Security

Pandemics are one of multiple classes of complex system failures on the horizon in the 21st century. These vectors are best understood through the concept of human security, which prioritizes local and communal security concerns over traditional national security. Two human security areas warrant deeper consideration for understanding current and future crises: the environment, and global population growth and distribution. Tragically, the two will interact in ways that increase the probability of future pandemics.

Current concerns about climate change and how it could produce cascading failures in social, economic, and political systems are not unwarranted. Environmental factors often exert an indirect effect that amplifies other stressors. In Global Crisis, his new history of the 17th century, Geoffrey Parker charts how the Little Ice Age, a rapid cooling that took place over years, set the conditions for the Thirty Years War as well as the collapse of multiple empires. As crop yields declined, populations migrated, stressing the carrying capacity of local governments and cascading into wider political upheaval.

This pattern is also on display more recently in the Middle East and Africa. In Syria, a once-in-half-a-millennium drought preceded the civil war, producing patterns of internal and external migration that have prolonged the conflict and complicated its resolution. In West Africa, overfishing is one of the major variables pushing thousands to risk passage to Europe via illicit migration networks. As local fishermen lose their jobs, the broader economy suffers and their social status erodes.

The current pandemic is not the first pattern of global disease to induce system failure and catastrophic collapse. In his book 1491, Charles Mann charts how European diseases devastated indigenous peoples in the Americas and changed patterns of settlement and colonization. In Mosquito Empires, J.R. McNeill shows how disease patterns in the Greater Caribbean altered great-power competition in the Americas.

Preparing for pandemics, migration, climate change, governance failures, and other human security challenges will likely become a persistent requirement in the 21st century. You don’t prepare for these challenges by buying additional aircraft carriers. You prepare by hedging: making multiple small investments oriented to prevent, mitigate, and manage the consequences of system failure. In other words, you buy down future risk and increase the likelihood you can stabilize a collapsing system. You stockpile medical equipment. You ensure sufficient medical capacity to scale up and meet a future crisis. You build in continuity of the economy and government procedures at the local, state, and federal levels, and you test them periodically against a range of possible system collapse events that extend well beyond a narrow focus on great-power competition and nuclear exchange.

Fragility and Cyber Security

Fragility is also found in worlds that humans create. The myriad network connections across physical, logical, and cyber-persona layers that constitute cyberspace represent one of the most complex and critical networks upon which we all rely. These connections are extensions of our social, political, and economic lives. States and non-state actors from terrorists to criminals exploit these connections, often seeking a way short of conflict to spy on, coerce, or extort rivals.

Like other complex systems, cyberspace is relatively stable but risks cascading failures that ripple beyond the internet to affect our daily lives. Russia’s 2017 NotPetya attack cascaded through the global economy. Groups routinely use, trade, and sell network exploits from previous attacks, commoditizing malware and leading to “crime-as-a-service” marketplaces on the dark web. Cyber exchanges between states, covert action for a connected age, create entirely new security challenges and threat vectors. Former state cyber operators and spies sell their expertise to the highest bidder. Authoritarian regimes exploit the marketplace to wage cyber repression campaigns against their own citizens. States like Russia conduct both white and black propaganda through social media, undermining trust in public institutions and even the legitimacy of entire election systems.

Put bluntly, there is a positive feedback loop increasing the severity and frequency of cyber operations that risks breaking cyberspace, further polarizing societies, and reducing economic growth and prosperity in the 21st century. Like the pandemic, failure will be sudden and sharp but difficult to predict in advance. The system could continue to erode, operating at a degraded level until crossing a critical threshold.

This logic of system failure helps explain how other complex systems could unravel. As the senior research director for the U.S. Cyberspace Solarium Commission, I used concepts from systems theory to develop the stress tests we used to evaluate competing strategies for securing cyberspace. The commission assessed each strategy against two scenarios: Slow Burn and Break Glass. The Slow Burn scenario reflected the process of system erosion to the point of failure, with an escalating series of cyber events over the course of a year. The Break Glass scenario explored sudden, systemic failure. The commission weighed how well each strategic approach (shape behavior, deny benefits, and impose costs) could prevent, manage, and mitigate the effects of the cascading series of failures captured in the crisis scenarios.

This vetting process meant that the final report captured elements of each original strategic approach but tended to adopt a posture best captured by the concept of deterrence by denial with a twist. No policy will stop all cyber attacks, just as no policy will ever stop all espionage. Rather, the goal was to reduce the severity and frequency of attacks (degrading the feedback loop) and to create internal resiliencies, including public-private partnerships, that make it harder to hold the United States hostage in cyberspace, while also moderating the concept of “defend forward” to limit escalation risks. This approach was supported by parallel studies on the limits of offense in cyberspace and the unique crisis dynamics associated with cyber operations.

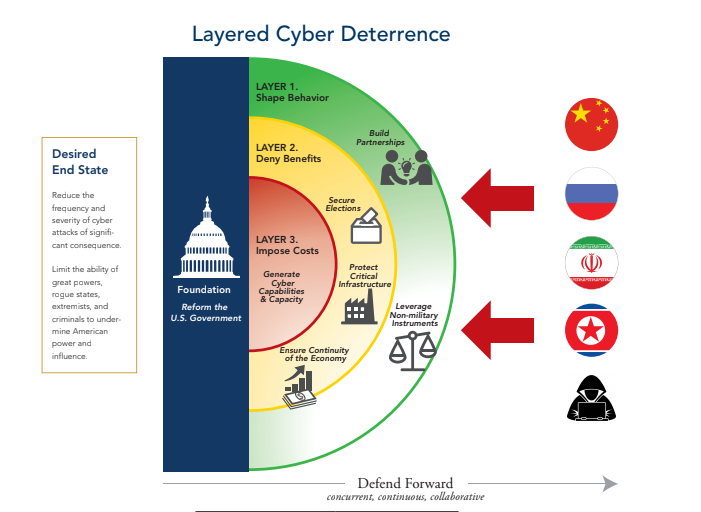

The majority of the resulting proposals that made their way into the final report reflect this logic and its overall strategy: layered cyber deterrence. The strategy seeks to stabilize the current system through the coordination of activities across three layers, each with their own respective hedges for limiting the severity and frequency of cyber attacks. The graphic below depicts this strategy.

Image from the U.S. Cyberspace Solarium Report

In the “shape behavior” layer, the United States works with partners and allies to promote responsible norms of behavior in cyberspace. Systems exist as both material relationships and ideas. Those ideas help bound behavior.

In the “deny benefits” layer, the policy recommendations, as hedges, seek to reshape the cyber ecosystem, building in additional resilience and adaptability. The recommendations include incentives for public-private sector collaboration and creating the type of hedges against catastrophic failure required to establish continuity of the economy and government while protecting critical infrastructure.

In the “impose costs” layer, the policy recommendations augment existing strategies that call for defending forward. This concept is more akin to Cold War-era forward defense, albeit in cyberspace, than to the calls for persistent engagement and seizing the initiative. Bold offensive action in cyberspace, outside carefully calibrated instances, is dangerous and risks exacerbating system failure. Rather, the U.S. government ensures it has the capability and capacity to respond at a time and place of its choosing using all instruments of power — not just cyber — and in a manner consistent with international law. This stance reflects what Schelling called the diplomacy of violence: You use the threat of cyber operations and demonstrated capabilities to shape behavior as opposed to haphazardly using brute force online to break things and claim hollow, often fleeting victories.

Resilience and 21st Century Security

Complex system failure will be the defining risk of the 21st century. From pandemics to cyberspace, shocks will emerge suddenly and quickly cascade to affect people around the world. The central question, then, is how to prevent systems from failing when the nature of complex systems makes such forecasts almost impossible.

The answer is simple: Build in resilience as a hedge against future shocks. This foundational idea has profound implications for how we think about security, articulate strategy, and develop policies for securing the future. It inverts the old mantra that says the best defense is a good offense. When the goal is to stabilize the system, the best offense is a good defense. Societies at large must prepare to respond to crises before they occur, building in the adaptability and resilience required to pick up the pieces when the system falls apart. It is not just a job for government, though the federal government plays a critical role.

I have previously written in War on the Rocks about complex systems and how they can inform thinking about the character of war. Yet what struck me throughout the Solarium process was the importance of systems thinking beyond warfare. Strategic competition today takes place in gray zones and involves shaping behavior through economic statecraft, cyberspace, and a relentless war of ideas and disinformation. Military power supports this competition, but it is no longer the defining contest of wills. As Russian strategic theory highlights, the ratio of nonmilitary to military measures is 4:1, and the utility of force may be declining. Coercion takes on novel forms and creates new vectors of competition, such as contests over technology standards and over who wins the global 5G race: authoritarian or democratic regimes.

Beyond great-power competition, systems like the environment and cyberspace represent public goods whose fragility affects us all. Public goods are often subject to a tragedy of the commons in which individual behavior can cascade and threaten the entire system. As seen in the Solarium event used to assess competing strategies and articulated in the resulting policies, the future calls for more stress testing and red teaming to identify risk vectors and coordinate hedge investments that prevent, manage, or mitigate the system’s fragility.

Most importantly, and only partially captured in the U.S. Cyberspace Solarium Commission report, illuminating how complex systems fail requires increased investments in collecting information, modeling risk, and conducting competitive strategy exercises like Solarium. For the good of everyone who uses cyberspace, the world needs a Bureau of Cyber Statistics, just as we have already invested in government agencies and international bodies that report on economic statistics, climate change, pandemics, and migration. Missing from the report, but equally critical, is the need to stand up new research initiatives that evaluate systemic risk. The model for this effort remains the Political Instability Task Force. Efforts like it should be expanded to look at broadly defined systemic risks like cyberspace, climate change, migration, and other pressing human security challenges. A cyber instability task force would be an important step in the right direction.

Small investments in efforts like the U.S. Cyberspace Solarium Commission are hedges against future shocks. They don’t stop failure, but they help policymakers and citizens visualize and describe alternative futures in a manner that supports planning and building defense and resilience into the system. They offer forums to build the types of public-private sector and cross-government bridges required to solve 21st-century security challenges.

Benjamin Jensen holds a dual appointment as a professor of strategic studies at the Marine Corps University School of Advanced Warfighting and as a scholar-in-residence at American University, School of International Service. He is also a senior fellow at the Atlantic Council and an officer in the U.S. Army Reserve 75th Innovation Command. He recently served as the senior research director and lead writer for the U.S. Cyberspace Solarium Commission. The views expressed are his own.

Image: U.S. Marine Corps (Photo by Lance Cpl. Jaime Reyes)